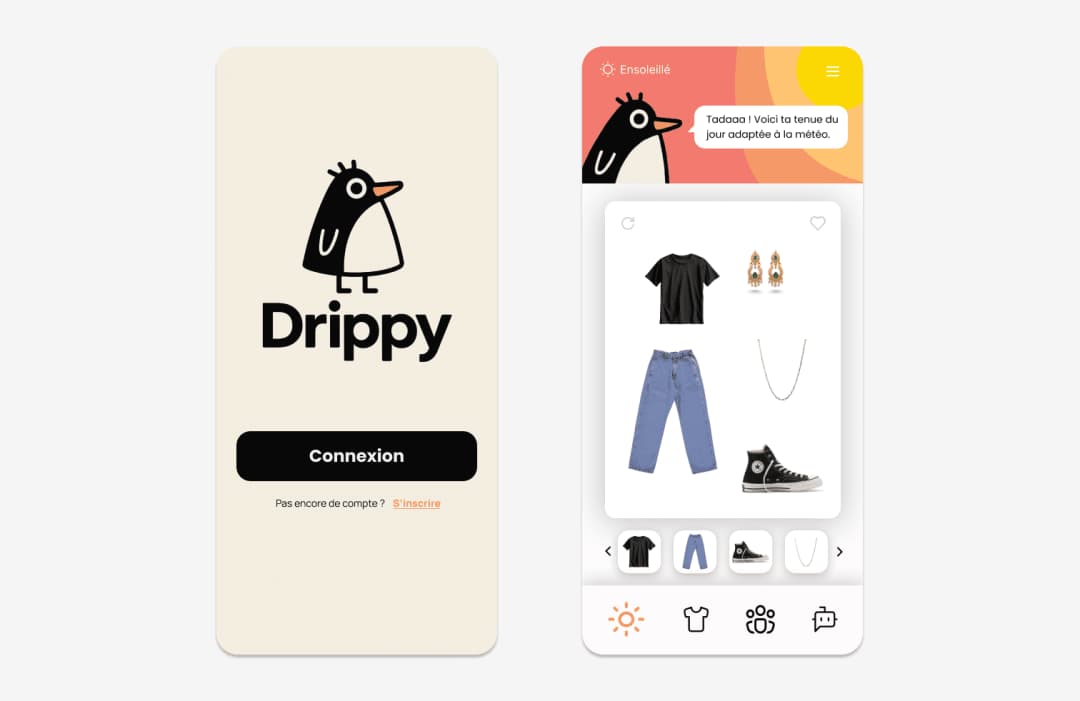

Outfit structure generation pipeline using LLM-augmented data.

Drippy is a Python-based proof of concept for building fully local inference pipelines using Ollama. It features two primary pipelines—data integration and recommendation—each organized in its own module. The project also includes Jupyter notebooks for exploratory analysis and a complete end-to-end demonstration. Setup is simple: clone the repository, install Ollama, configure a virtual environment, and install the necessary dependencies. A demonstration video showcases the pipelines in action.

Created by Paul Louppe, Drippy is an experimental framework designed to demonstrate how modular pipelines can be built for machine-aided data processing and recommendations, powered entirely by local large language models (LLMs) through Ollama. By avoiding reliance on cloud-based APIs, Drippy prioritizes privacy, offline operability, and user control over the full data lifecycle—from ingestion and transformation to inference and output.

Location: pipeline_data_integration/

This module manages the end-to-end preparation of data, including:

- Loading raw datasets from the data/ directory
- Applying data augmentation methods such as synthetic sampling and feature engineering
- Preprocessing steps including normalization and serialization for downstream tasks
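
The stages above can be sketched with the standard library alone. This is a minimal illustration, not the module's actual code: the column names (`item`, `category`, `price`), the helper functions, and the price-jitter approach to synthetic sampling are all assumptions made for the example, and the raw data is inlined rather than read from data/.

```python
import csv
import io
import json
import random


def load_rows(csv_text):
    """Parse CSV text into dicts (stand-in for reading a file under data/)."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def augment(rows, copies=1, seed=0):
    """Add a derived text feature, then append jittered synthetic copies."""
    rng = random.Random(seed)
    base = [
        dict(r, price=float(r["price"]),
             description=f'{r["item"]} ({r["category"]})',  # engineered feature
             synthetic=False)
        for r in rows
    ]
    out = list(base)
    for r in base:
        for _ in range(copies):
            jitter = rng.uniform(0.9, 1.1)  # hypothetical +/-10% price noise
            out.append(dict(r, price=round(r["price"] * jitter, 2), synthetic=True))
    return out


def normalize(rows, field="price"):
    """Min-max scale a numeric field into [0, 1] under a new key."""
    values = [r[field] for r in rows]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero when all values match
    return [dict(r, **{field + "_norm": (r[field] - lo) / span}) for r in rows]


def serialize(rows):
    """Serialize as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in rows)


RAW = "item,category,price\nwhite tee,top,20\nblue jeans,bottom,60\nsneakers,shoes,100\n"
rows = normalize(augment(load_rows(RAW)))
jsonl = serialize(rows)
```

Keeping each stage a pure function that takes and returns a list of dicts makes it easy to reorder or swap stages while experimenting in a notebook.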

Location: pipeline_recommendation/

This pipeline generates recommendations using local LLMs. It consists of:

- Prompt templates designed for retrieval-augmented generation (RAG)
- Query orchestration logic using Ollama’s local model serving
- Post-processing stages for filtering, ranking, and formatting outputs
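
As a rough sketch of how these three pieces might fit together, the example below builds a prompt from already-retrieved wardrobe items, sends it to Ollama's local `/api/generate` endpoint, and filters and ranks the reply. The prompt wording, the model name `llama3`, the JSON reply shape, and every function name here are assumptions for illustration; the retrieval step itself is taken as given. The `generate` parameter is injectable so the flow can be exercised without a running server.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

# Hypothetical RAG-style template: retrieved items are pasted in as context.
PROMPT_TEMPLATE = (
    "You are a stylist. Using only the wardrobe items below, suggest outfits.\n"
    "Wardrobe:\n{context}\n"
    "Request: {query}\n"
    'Answer with a JSON list of objects like {{"items": [...], "score": 0.5}}.'
)


def build_prompt(query, items):
    """Fill the template with the retrieved items and the user request."""
    context = "\n".join(f"- {item}" for item in items)
    return PROMPT_TEMPLATE.format(context=context, query=query)


def ollama_generate(prompt, model="llama3"):
    """Call the local Ollama server once, without streaming (model name assumed)."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]


def postprocess(raw, wardrobe, top_k=2):
    """Filter out malformed or hallucinated outfits, rank by score, format."""
    try:
        candidates = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(candidates, list):
        return []
    known = set(wardrobe)
    valid = [
        c for c in candidates
        if isinstance(c, dict) and c.get("items") and set(c["items"]) <= known
    ]
    ranked = sorted(valid, key=lambda c: c.get("score", 0), reverse=True)
    return [" + ".join(c["items"]) for c in ranked[:top_k]]


def recommend(query, wardrobe, generate=ollama_generate):
    """Prompt -> local model -> post-processing."""
    return postprocess(generate(build_prompt(query, wardrobe)), wardrobe)
```

With Ollama running and a model pulled, `recommend("casual weekend", ["white tee", "blue jeans", "sneakers"])` would send the request to the local server; passing a stub as `generate` lets the prompt and post-processing logic be checked offline.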

- exploratory_notebook.ipynb – An interactive workspace to test data flows and prompt formulations
- poc_notebook.ipynb – A guided, step-by-step demonstration of the full pipeline, from raw input to final recommendation

Demonstration Video:

Watch the proof-of-concept demo

Drippy provides a compact template for designing modular, local pipelines with large language models. Whether your focus is data engineering, prompt experimentation, or recommendation systems, it offers a starting point for privacy-conscious, offline-first AI development.